User Stories: An Agile Introduction. User stories are one of the primary development artifacts for Scrum and Extreme Programming (XP) project teams. A user story is a very high-level definition of a requirement, containing just enough information so that the developers can produce a reasonable estimate of the effort to implement it.

Let's take an example: suppose we have 5 stories, A, B, C, D and E.

The stories are ordered based on their importance (priority). How do you estimate them? Is it estimated based on the size of the feature? For example I have given them estimate values:

Let's suppose it is a 2-week sprint. That is 14 days; with a team size of 5, 14 x 5 = 70 man-days. Now what does the value 10 mean? Does it mean the amount of time (hours or days) a team should spend? And what are story points? Suppose this is the first sprint; how will you estimate the number of sprints when you don't have the last sprint's velocity?

closed as primarily opinion-based by Vadim Kotov, gunr2171, Psi, Void Ray, Sudhir Jonathan Nov 3 '17 at 18:50

6 Answers

Argh! Serves me right for writing from memory.

A story point is related to the estimate of course, and when you try to figure out how much you can do for a sprint, a story point is one unit of 'work' needed to implement part of or a whole feature. One story point could be a day, or an hour, or something in between. I've confused the 'estimate' and 'story point' below, don't know what I was thinking.

What I originally wrote was 'estimates' and 'story points'. What I meant to write (and edited below) was 'story points' and 'velocity'.

Story points and velocity go hand in hand, and they work together to give you a sense of 'how much can we complete in a given period of time'.

Let's take an example.

Let's say you want to estimate features in hours, so a feature that has an estimate of 4 will take 4 hours to complete, by one person, so you assign such an estimate to all the features. You thus consider that feature, or its 'story', worth 4 points when it comes to competing for resources.

Now let's also say you have 4 people on your project, each working a normal 40-hour week, but, due to other things happening around them, like support, talking to marketing, meetings, etc., each person will only be able to work 75% on the actual features, the other 25% will be used on those other tasks.

So each person has 30 hours available each week, which gives you 30*4 = 120 hours total for that week when you count all the 4 people.

Now let's also say you're trying to create a sprint of 3 weeks, which means you have 3*120 = 360 hours' worth of work you can complete. This is your velocity: how fast you're moving, how many 'story points' you can complete.
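The arithmetic so far fits in a few lines of Python. This is only a sketch: the function and parameter names are mine, and the 75% figure is the assumed share of time that actually goes into feature work.

```python
def sprint_capacity_hours(people: int, hours_per_week: float,
                          focus_factor: float, weeks: int) -> float:
    """Hours of feature work the whole team can expect to complete in one sprint."""
    # focus_factor is the fraction of each week left after support, meetings, etc.
    return people * hours_per_week * focus_factor * weeks


# 4 people, 40-hour weeks, 75% of the time on features, 3-week sprint
print(sprint_capacity_hours(people=4, hours_per_week=40, focus_factor=0.75, weeks=3))  # 360.0
```

Keeping the focus factor as a separate parameter makes it easy to adjust once a few sprints show how much time really goes into features.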

The unit of your velocity has to be compatible with the unit of your story points. You can't compare stories measured in 'how many cups of coffee will the developer(s) consume while implementing this' with a velocity measured in 'how many hours do we have available'.

You then try to find a set of features, ranked by their priority, that together take close to, but not over, 360 points. The simplest way is to accumulate the sum from the top of the list downwards until you reach a task that brings the sum to, or over, those 360 points. If it tips the sum over, don't include the task.
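A minimal Python sketch of that top-down selection. The backlog, the point values and the capacity of 20 are made up for illustration, and `plan_sprint` is just a name I picked.

```python
from typing import List, Tuple


def plan_sprint(backlog: List[Tuple[str, int]], capacity: int) -> List[str]:
    """Take stories from the top of a priority-ordered backlog until one no longer fits."""
    selected = []
    total = 0
    for name, points in backlog:
        if total + points > capacity:
            break  # this story tips the sum over, so stop without including it
        selected.append(name)
        total += points
        if total == capacity:
            break  # capacity reached exactly, nothing more fits
    return selected


# Hypothetical backlog, already sorted by priority (highest first)
backlog = [("A", 8), ("B", 5), ("C", 5), ("D", 3), ("E", 2)]
print(plan_sprint(backlog, capacity=20))  # ['A', 'B', 'C']
```

Note that this stops at the first story that does not fit instead of skipping ahead to a smaller, lower-priority one (E would still fit here). Whether to pull such a story forward is a judgement call for the team.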

You could just as easily estimate in days, or in cups of coffee consumed by the developer, just as long as the number is representative of the type of job you're doing and can be related to the actual work you will perform (i.e. how much time you have available).

You should also evaluate your workload after each sprint to figure out if that 75% number is accurate. For instance, if you only managed half of what you set out to do, figure out whether your feature estimates were wrong or your workload estimates were wrong. Then take what you've learned into account when estimating and planning the following sprints.

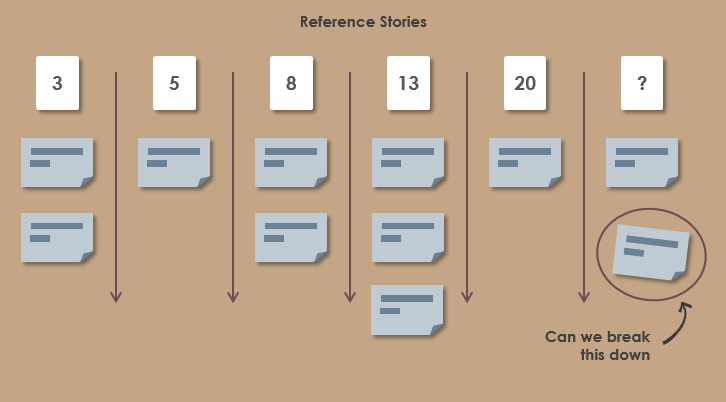

Also note that features should be split up if they become too big. The main reason for this is that bigger estimates have a lot more uncertainty built into them, and you can mitigate that by splitting it up into sub-features and estimating those. The big overall feature then becomes the sum of all the sub-features. It might also give you the ability to split the feature over several people, by assigning different sub-features to different people.

A good rule of thumb is that features that have an estimate over 1 day should probably be split.

Remember that points are just ROMs (rough orders of magnitude), commonly established through 'Planning Poker'. The first few sprints are when you start to identify what the points mean to the team, and the longer you go, the more accurate the team gets.

Also, look to use points that are a bit more spaced out. A practice I've seen and used is the Fibonacci sequence; it makes sure that you don't have too many 1-point differences.

Also, don't forget testers. When pointing a story, anyone doing testing needs to weigh in, as sometimes a simple development task can cause a large testing effort. If they are true sprints, the idea is to have everything completed so that it could be shipped (built, tested and documented). So the estimate of a story is determined by the team, not by an individual.

The value 10 is merely a value relative to the other estimates, e.g. it is half as hard as a 20 or slightly more difficult than a 9. It is worth pointing out that there isn't a specific translation of 1 point = x hours of effort.

Where I work, we have what we call 'epic points', which measure how hard some high-level story is, e.g. integrate Search into a new website. An epic consists of multiple stories, and we estimate hours on each story that is created from breaking down the epic, e.g. just put Search in for support documents on the site. The 'epic points' are distributed in a variation of Fibonacci numbers (1, 2, 3, 5, 8, 13, 21, 28, 35) so that broader, vaguer epics simply get a large value; anything greater than 8 is an indicator that the epic can be broken down into more easily estimable stories. It is also worth noting that where I work we only work 5 days a week, and within each sprint a day is lost to meetings like the demo, iteration planning meeting, retrospective and review, so there are only 9 days to a sprint. Add in pair programming for some things, time for fixing bugs and other non-project work like support tickets, and it becomes rather hard to say how many hours the handful of developers will spend in the sprint.

The first few sprints are where the values start to become more concrete: with the experience gained, it becomes clearer how to guess the value of an estimate.

With a new team or project we always start out by assuming a story point is a single 'ideal day', and we figure each developer getting around 3.5 ideal days per week, which is how we calculate our likely initial velocity.
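As a rough sketch of that starting guess in Python: the function name, the team size of 4 and the 2-week sprint are assumptions for the example. Only the 3.5 ideal days per developer per week comes from how we do it.

```python
def initial_velocity(developers: int, weeks_per_sprint: int,
                     ideal_days_per_week: float = 3.5) -> float:
    """First-guess velocity in story points, with 1 point taken as 1 ideal day."""
    return developers * weeks_per_sprint * ideal_days_per_week


# Hypothetical team: 4 developers, 2-week sprints
print(initial_velocity(developers=4, weeks_per_sprint=2))  # 28.0
```

Treat the result as a placeholder only; the velocity measured after the first couple of sprints should replace it.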

Once you've gone through the 'planning poker' stage and balanced/compared all your stories, the actual real-world duration of a story point is really unknown - all you really have is a pretty good idea of relative duration, and use your best judgement to come up with a likely velocity.

At least, that's how I do it!

If you are also aiming your story points at being roughly equal to an ideal day, then I'd suggest breaking your stories down into smaller stories, otherwise you're not going to have a good time in planning and tracking iterations.

Good answers all around.

One point I would like to add is that it's not really important what you choose as a base for your points value (hours, ideal days, whatever else). The important thing is to keep it consistent.

If you do keep it consistent, it will allow you to discover the 'true velocity' of your team. For example, let's say you had a few iterations:

And now you are starting iteration 4 and you have the following in the backlog (sorted on priority):

Now assuming your points estimations are consistent you can be reasonably sure that the team will finish items 1,2 and probably 3 but definitely not 4.
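A small Python sketch of that reasoning, using made-up numbers: three past iterations and a four-item backlog, all hypothetical. It takes the average of the completed points as the velocity and walks down the backlog until the running total exceeds it.

```python
def forecast(completed_points, backlog):
    """Return (average velocity, backlog items likely to fit in the next iteration)."""
    velocity = sum(completed_points) / len(completed_points)
    likely = []
    running_total = 0
    for name, points in backlog:
        running_total += points
        if running_total > velocity:
            break  # this item and everything below it probably will not fit
        likely.append(name)
    return velocity, likely


# Hypothetical history: points actually completed in iterations 1 to 3
completed = [18, 22, 20]
# Hypothetical backlog for iteration 4, sorted by priority
backlog = [("item 1", 8), ("item 2", 5), ("item 3", 5), ("item 4", 8)]

print(forecast(completed, backlog))  # (20.0, ['item 1', 'item 2', 'item 3'])
```

A plain average is the simplest choice here; some teams prefer the last few iterations only, or the worst recent one, to be more conservative.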

You can apply the same to the release backlog to improve your prediction of the release date. This is what allows Scrum teams to improve their estimations as they go along.

JB King has the best answer, but no votes which means incorrect information is being propagated and contributing to the generally poor interpretation of scrum. Please see the real answers from one of the people who designed Scrum here:

Remember, it's about effort, not complexity.

Now read about and watch a video here:

When estimating the relative size of user stories in agile software development the members of the team are supposed to estimate the size of a user story as being 1, 2, 3, 5, 8, 13, ... . So the estimated values should resemble the Fibonacci series. But I wonder, why?

The description of http://en.wikipedia.org/wiki/Planning_poker on Wikipedia holds the mysterious sentence:

The reason for using the Fibonacci sequence is to reflect the inherent uncertainty in estimating larger items.

But why should there be inherent uncertainty in larger items? Isn't the uncertainty higher if we make fewer measurements, meaning if fewer people estimate the same story? And even if the uncertainty is higher in larger stories, why does that imply the use of the Fibonacci sequence? Is there a mathematical or statistical reason for it? Otherwise, using the Fibonacci series for estimation feels like cargo cult science to me.

closed as off-topic by devnull, Adriano Repetti, RobV, Serge Ballesta, Малъ Скрылевъ Jul 22 '14 at 12:53

6 Answers

The Fibonacci series is just one example of an exponential estimation scale. The reason an exponential scale is used comes from Information Theory.

The information that we obtain out of estimation grows much slower than the precision of estimation. In fact it grows as a logarithmic function. This is the reason for the higher uncertainty for larger items.

Determining the optimal base of the exponential scale (normalization) is difficult in practice. The base corresponding to the Fibonacci scale may or may not be optimal.

Here is a more detailed explanation of the mathematical justification: http://www.yakyma.com/2012/05/why-progressive-estimation-scale-is-so.html

Out of the first six numbers of the Fibonacci scale (1, 2, 3, 5, 8, 13), four are prime. This limits the ways a task can be broken down equally into smaller tasks so that multiple people can work on it in parallel. Breaking tasks down that way could lead to the misconception that the speed of a task scales proportionally with the number of people working on it. The 2^n series is most vulnerable to this problem. The Fibonacci sequence in fact forces one to re-estimate the smaller tasks one by one.

According to this agile blog

'because they grow at about the same rate at which we humans can perceive meaningful changes in magnitude.'

Yeah right. I think it's because they add an air of legitimacy (Fibonacci! math!) to what is in essence a very high-level, early-stage sizing (not scoping) exercise (which does have value).

But you can get the same results using t-shirt sizing...

You definitely want something exponential, so that you can express any quantity of time with a constant relative error. The error of your estimate is also very likely to be proportional to the size of the estimate itself.

So you want something: a) with integers, b) exponential, c) easy.

Now why Fibonacci instead of 1, 2, 4, 8? My guess is that it's because Fibonacci grows more slowly. It grows like goldenratio^n, and goldenratio = 1.61...
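You can check the growth rate yourself. The Python sketch below (the helper name is mine) builds the planning-poker style scale starting at 1, 2 and prints the ratio between neighbouring values, which settles near the golden ratio instead of the constant 2 you get from 1, 2, 4, 8.

```python
def fibonacci_scale(n: int) -> list:
    """First n values of the Fibonacci-style estimation scale 1, 2, 3, 5, 8, ..."""
    scale = [1, 2]
    while len(scale) < n:
        scale.append(scale[-1] + scale[-2])
    return scale[:n]


scale = fibonacci_scale(10)  # [1, 2, 3, 5, 8, 13, 21, 34, 55, 89]
ratios = [b / a for a, b in zip(scale, scale[1:])]
golden_ratio = (1 + 5 ** 0.5) / 2

print([round(r, 3) for r in ratios])
print(round(golden_ratio, 3))  # 1.618
```

So each step up the scale is roughly a 60% increase, against 100% for powers of two.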

The Fibonacci sequence is just one of several that are used in project planning poker.

It is difficult to accurately estimate large units of work and it is easy to get bogged down in hours vs days discussions if your numbers are too 'realistic'.

I like the explanation at http://www.agilelearninglabs.com/2009/06/story-sizing-a-better-start-than-planning-poker/, namely that the Fibonacci series is a set of numbers that we can intuitively tell apart as different magnitudes.

I use Fibonacci for a couple of reasons:

- As a task gets larger, the details become more difficult to grasp

- The task estimate is the number of hours for anyone in the team to complete the task

- Not everyone in the team will have the same amount of experience for a particular task, so that adds to the uncertainty too

- Humans get fatigued over larger and potentially more complex tasks. While a task twice as complex is solved in double the time by a computer, it may take quite a bit more for a developer.

As we add up all the uncertainties, we are less sure of what the hours should actually be. It ends up being easier if we can just gauge whether this task is larger or smaller than another one we have already estimated. As we increase the size/complexity of the task, the effect of uncertainty is also amplified. I would happily take an estimate of 13 hours for a task that seems twice as large as one I've previously estimated at 5 hours.
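In code, that habit amounts to rounding a raw guess up to the next value on the scale. A minimal Python sketch, assuming a scale that tops out at 21 and a round-up rule (both are my choices for the example, not a standard):

```python
import bisect

SCALE = [1, 2, 3, 5, 8, 13, 21]  # assumed estimation scale, in hours


def snap_up(raw_estimate: float) -> int:
    """Round a raw estimate up to the nearest value on the scale."""
    index = bisect.bisect_left(SCALE, raw_estimate)
    if index == len(SCALE):
        raise ValueError("larger than the biggest value on the scale; split the task")
    return SCALE[index]


# A task that seems twice as large as one estimated at 5 hours
print(snap_up(2 * 5))  # 13
```

Rounding up instead of to the nearest value bakes the extra uncertainty of larger tasks into the estimate.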